Science & Technology

July 19, 2017

Top US general warns against killer robots

By T.K. Randall

Could we end up in a world dominated by sentient machines? Image Credit: CC BY 2.0 Dick Thomas Johnson

General Paul Selva has spoken out about the dangers of creating fully autonomous weapon systems.

The risks of giving autonomy to so-called 'killer machines' have been highlighted time and again over the years in movies such as 'The Terminator' and 'The Matrix', but could an intelligent computer ever truly decide that humanity is a threat and attempt to wipe us off the planet?

During a recent Senate Armed Services Committee hearing, US General Paul Selva stressed how important it is to maintain human control over systems capable of killing people, "lest we unleash on humanity a set of robots that we don't know how to control."

"I don't think it's reasonable for us to put robots in charge of whether or not we take a human life," he said. "There will be a raucous debate in the department about whether or not we take humans out of the decision to take lethal action."

It's a concern that has been raised many times by SpaceX CEO Elon Musk, who has warned that intelligent machines represent a "fundamental risk to the existence of civilization."

"I have access to the very most cutting edge AI, and I think people should be really concerned about it," he said. "AI is a rare case where I think we need to be proactive in regulation instead of reactive."

"Because I think by the time we are reactive in AI regulation, it's too late."

Source: IB Times

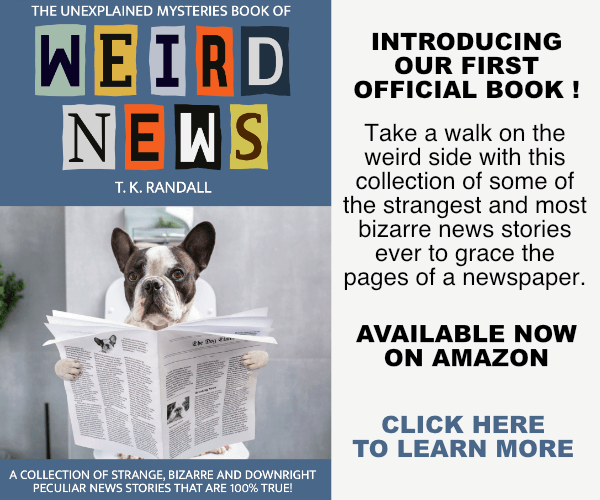

The Unexplained Mysteries

Book of Weird News

AVAILABLE NOW

Take a walk on the weird side with this compilation of some of the weirdest stories ever to grace the pages of a newspaper.

Click here to learn more

Support us on Patreon

BONUS CONTENTFor less than the cost of a cup of coffee, you can gain access to a wide range of exclusive perks including our popular 'Lost Ghost Stories' series.

Click here to learn more

Ancient Mysteries and Alternative History

Extraterrestrial Life and The UFO Phenomenon

UK and Europe

Unexplained TV, Books, Film and Radio

Total Posts: 7,779,942 Topics: 325,660 Members: 203,950

Not a member yet ? Click here to join - registration is free and only takes a moment!

Not a member yet ? Click here to join - registration is free and only takes a moment!

Please Login or Register to post a comment.